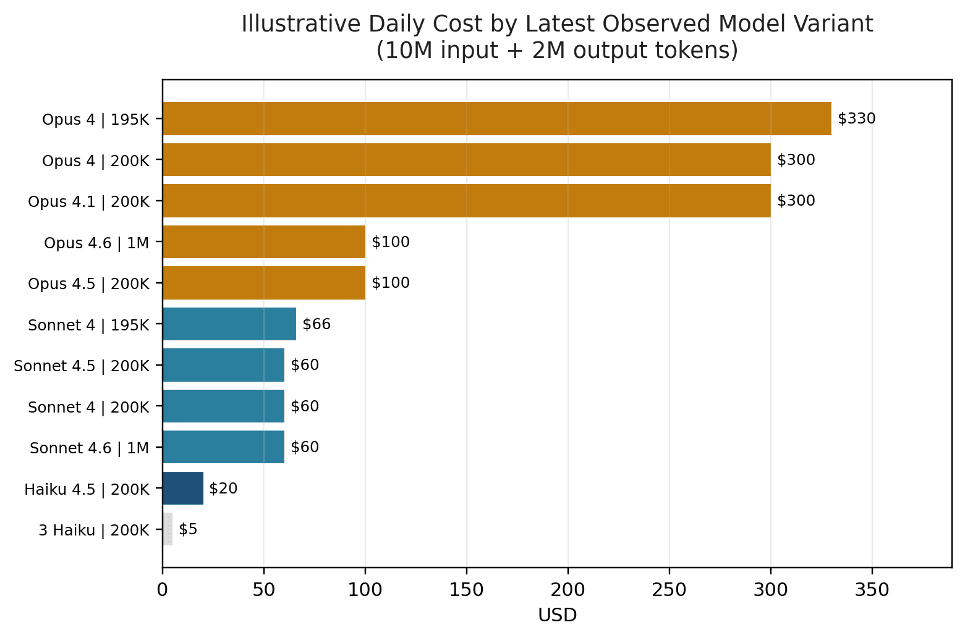

Anthropic will run your agent 24/7 for $58 a month. That’s the trade-press headline on Claude Managed Agents pricing. I took it at face value, built a Managed Agent yesterday, and ran it on the kind of workload I’d actually bill a client for. The 30-day projection came out to $0.42.

If you’re sizing up Claude Managed Agents pricing for a proposal — or negotiating a retainer on top of one — the number that matters is not $0.08 per hour. It’s the shape of your token spend. Specifically, the cache writes on cold calls, which on my measurements cost 240 times more than the runtime fee everyone is quoting.

What Claude Managed Agents Actually Is

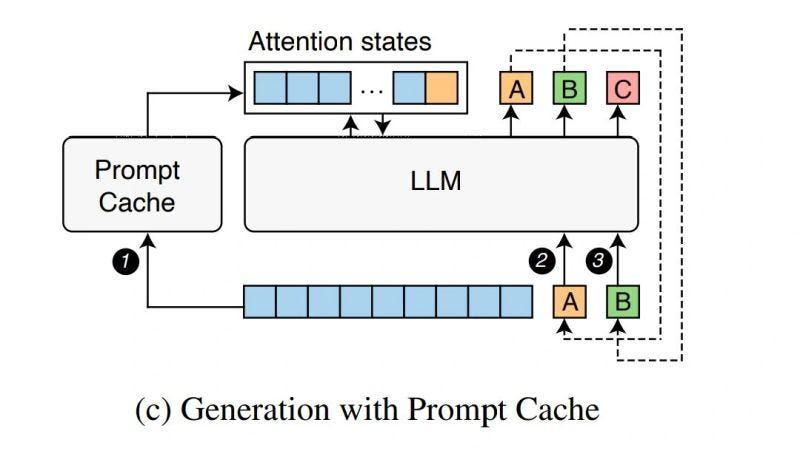

Before the numbers, the product. Managed Agents is Anthropic’s API-driven runtime for running an agent in their cloud. You define three things — an Agent (model plus system prompt plus tools), an Environment (sandboxed container), and a Session (an actual run) — and Anthropic handles orchestration, sandboxing, credentials, and long-running state.

There’s no UI. You trigger runs by hitting REST endpoints, scheduling them with Routines, or firing a webhook from something like n8n. It’s been in public beta since April 8, 2026. Beta access was already live on my production API key — no waitlist, no onboarding call.

What a Real Workload Actually Cost

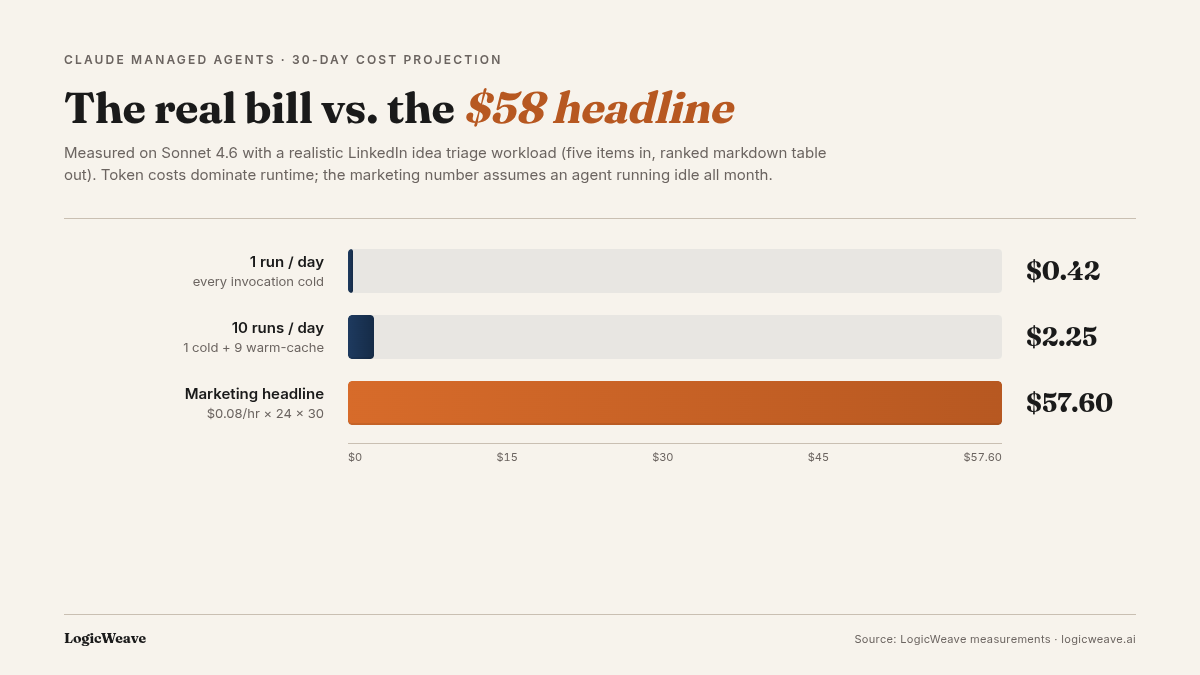

I picked a workload that looks like a recurring consulting engagement: LinkedIn idea triage. The agent gets five post titles, scores each on a rubric, and returns a ranked markdown table. About 300 tokens of output per run. Simple on purpose — I wanted clean numbers, not a tangled scenario.

Here’s what came back from three consecutive runs of the same prompt on Sonnet 4.6, using the client.beta.sessions interface to stream events:

- Call 1 (cold): 3 input / 323 output / 2,070 cache writes / 3,375 cache reads — billed 13.9s — $0.014

- Call 2 (warm): 3 input / 334 output / 0 cache writes / 5,445 cache reads — billed 14.0s — $0.007

- Call 3 (warm): 3 input / 309 output / 0 cache writes / 5,445 cache reads — billed 14.8s — $0.007

The first call was cold. The next two landed within the five-minute cache TTL. Cost per call dropped 51% once the cache was warm. Extrapolated across 30 days, even assuming every morning starts cold, a daily version of this agent costs 42 cents a month (30 × $0.014). Ten runs a day costs $2.25. The $57.60 headline ($0.08 × 24 hours × 30 days) describes a scenario no real client actually requests: a session kept active around the clock for a month while doing nothing.
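The per-call figures can be rebuilt from the usage counts. This is a minimal sketch, not Anthropic's billing logic: the base rates of $3 and $15 per million input and output tokens are my assumption for Sonnet and are not stated in the measurements above, while the 1.25× and 0.1× cache multipliers and the $0.08 runtime fee come from this article. The function name `call_cost` is illustrative.

```python
# Assumed Sonnet list prices in USD per million tokens (an assumption, check current pricing).
INPUT_PER_MTOK = 3.00
OUTPUT_PER_MTOK = 15.00
CACHE_WRITE_MULT = 1.25   # 5-minute cache writes, per the article
CACHE_READ_MULT = 0.10    # cache reads, per the article
RUNTIME_PER_HOUR = 0.08   # Managed Agents runtime fee, per the article


def call_cost(input_tok, output_tok, cache_write_tok, cache_read_tok, billed_seconds):
    """Estimate the USD cost of one session run from its usage counts."""
    token_cost = (
        input_tok * INPUT_PER_MTOK
        + output_tok * OUTPUT_PER_MTOK
        + cache_write_tok * INPUT_PER_MTOK * CACHE_WRITE_MULT
        + cache_read_tok * INPUT_PER_MTOK * CACHE_READ_MULT
    ) / 1_000_000
    runtime_cost = billed_seconds / 3600 * RUNTIME_PER_HOUR
    return token_cost + runtime_cost


# Usage counts copied from calls 1 and 2 above.
cold = call_cost(3, 323, 2070, 3375, 13.9)
warm = call_cost(3, 334, 0, 5445, 14.0)
always_on = RUNTIME_PER_HOUR * 24 * 30

print(f"cold call: ${cold:.4f}")
print(f"warm call: ${warm:.4f}")
print(f"30 cold mornings: ${30 * cold:.2f}")
print(f"runtime fee, 24/7 for 30 days: ${always_on:.2f}")
```

Under those assumed rates the results round to the same $0.014 cold and $0.007 warm figures reported above, which suggests the rate assumptions are at least in the right neighborhood.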

One trap to flag before you try this: the anthropic Python SDK at version 0.86 doesn’t expose the beta.agents namespace. I got an AttributeError before I got a bill. Upgrade to 0.97 or later. The docs don’t highlight this.
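A small guard can catch the version problem before the first API call. This is a sketch under the assumption that the 0.97 cutoff reported above is the right threshold; it only reads the installed package version through the standard library and does not import or call the SDK. The helper names are hypothetical.

```python
import re
from importlib import metadata

MIN_VERSION = (0, 97)  # minimum version reported in this article


def parse_major_minor(version):
    """Extract (major, minor) from a version string such as '0.97.1'."""
    match = re.match(r"(\d+)\.(\d+)", version)
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    return int(match.group(1)), int(match.group(2))


def sdk_is_new_enough(version, minimum=MIN_VERSION):
    """True when the version is at or above the minimum (major, minor)."""
    return parse_major_minor(version) >= minimum


def check_installed_sdk():
    """Return a human-readable status for the installed anthropic package."""
    try:
        installed = metadata.version("anthropic")
    except metadata.PackageNotFoundError:
        return "anthropic is not installed"
    if sdk_is_new_enough(installed):
        return f"anthropic {installed} is recent enough"
    return f"anthropic {installed} is too old; upgrade to 0.97 or later"


print(check_installed_sdk())
```

Running this at the top of a script turns a confusing AttributeError into a plain upgrade message.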

Cache Writes Are the Hidden Bill

The surprise lives in a different usage field. On my first call — a smoke test asking the agent to say “hi” — the session returned 5,165 cache_creation.ephemeral_5m_input_tokens. That’s Anthropic caching the agent toolset definitions on first use. Priced at 1.25× the standard input rate, those cache writes cost $0.019 on a single hello.

Runtime for that same call? 3.8 seconds at $0.08 an hour comes to about $0.00008. On those rounded figures, cache writes cost roughly 240 times more than the runtime fee.

Read that again. Every piece of Managed Agents pricing commentary I’ve seen anchors on $0.08 per hour. Nobody is talking about the line item that actually dominated the bill on my first request.

The flip side is the pitch. Once the cache is warm, those 5,000+ input tokens become cache reads at 0.1× the base rate — roughly a cent and a half cheaper per call. On high-frequency or clustered workloads, cache discipline is the biggest single lever on a client’s monthly bill.
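The arithmetic behind the smoke-test numbers can be sketched in a few lines. As before, the $3 per million base input rate is my assumption for Sonnet; the token count, billed seconds, multipliers, and runtime fee are the ones quoted in this section.

```python
INPUT_PER_MTOK = 3.00     # assumed Sonnet base input price, USD per million tokens
CACHE_WRITE_MULT = 1.25   # per the article
CACHE_READ_MULT = 0.10    # per the article
RUNTIME_PER_HOUR = 0.08   # per the article

PREFIX_TOKENS = 5165      # cache writes on the "hi" smoke test
BILLED_SECONDS = 3.8      # runtime billed for the same call


def prefix_cost(tokens, multiplier):
    """USD cost of processing the cached prefix at a given rate multiplier."""
    return tokens * INPUT_PER_MTOK * multiplier / 1_000_000


write_cost = prefix_cost(PREFIX_TOKENS, CACHE_WRITE_MULT)  # cold call
read_cost = prefix_cost(PREFIX_TOKENS, CACHE_READ_MULT)    # warm call
runtime_cost = BILLED_SECONDS / 3600 * RUNTIME_PER_HOUR
ratio = write_cost / runtime_cost

print(f"cache write: ${write_cost:.4f}")
print(f"cache read:  ${read_cost:.4f}")
print(f"runtime:     ${runtime_cost:.6f}")
print(f"write/runtime ratio: {ratio:.0f}x")
print(f"saved per warm call: ${write_cost - read_cost:.4f}")
```

With unrounded inputs the ratio comes out a little under 230, the same order of magnitude as the 240 that falls out of the rounded $0.019 and $0.00008.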

Claude Managed Agents Pricing Should Anchor on Cache Discipline

Here’s the retainer frame I’d actually sell to a client:

Your agent’s real cost is the output tokens you generate and the cache hit rate on the rest. I’ll keep your workload under X tokens per month by batching calls into the five-minute TTL, keeping the system prompt lean, and pinning tool definitions that change slowly. That’s the monthly bill we target. The $0.08-per-hour runtime fee is a rounding error.

That’s a durable consulting offer. Setup is a one-time cost of $0. The ongoing value is token-shape optimization, which is invisible from the outside until you measure it. A consultant who runs the numbers can cut a client’s monthly Managed Agents bill in half without changing the model or the prompt. Compare that to switching to a cheaper model, which usually saves less and trades away capability.
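To see what batching into the five-minute TTL buys, it helps to label each call in a schedule as cold or warm. This is a sketch with one loud assumption: each call refreshes the TTL, so a call counts as warm if it starts within 300 seconds of the previous one. The function name and the example schedules are hypothetical.

```python
CACHE_TTL_SECONDS = 300  # five-minute TTL from the article


def classify_calls(timestamps, ttl=CACHE_TTL_SECONDS):
    """Label each call 'cold' or 'warm' given call start times in seconds.

    Assumption: every call refreshes the cache TTL, so a call is warm
    when it starts within `ttl` seconds of the call before it.
    """
    labels = []
    previous = None
    for ts in sorted(timestamps):
        if previous is not None and ts - previous <= ttl:
            labels.append("warm")
        else:
            labels.append("cold")
        previous = ts
    return labels


# Four calls spread an hour apart versus four calls a minute apart.
spread_out = [0, 3600, 7200, 10800]
batched = [0, 60, 120, 180]

print("spread out:", classify_calls(spread_out))
print("batched:   ", classify_calls(batched))
```

The same four calls pay for four cache writes when spread across the morning and one when clustered, which is the lever the retainer pitch leans on.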

What Doesn’t Port Over

A few rough edges to set expectations honestly before you commit.

claude.ai OAuth connectors don’t carry over. If you’ve OAuthed ClickUp, Linear, Slack, Supabase, Stripe, or any other connector into claude.ai, those don’t reappear in Managed Agents. Each MCP server has to be registered in the Managed Agents API and its credentials stored in a Vault. For most consultants’ existing claude.ai setups, this is the single biggest migration cost.

HTTP or SSE transport only. The stdio MCPs in most repos’ .mcp.json — the ones that shell out to npx or uvx — do not work. You need the provider’s hosted HTTP endpoint. If there isn’t one, you host a shim.

Agents can’t be deleted from the SDK. As of 0.97, agents.delete() isn’t exposed. Agents persist in your account. They don’t bill when idle, but you accumulate test agents fast during iteration.

None of this is a dealbreaker. All of it is worth knowing before you scope a migration. For an adjacent self-hosted stack — with full control over what’s connected — the OpenClaw one-click deploy covers the tradeoffs.
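To scope the transport restriction above, a quick audit of an existing .mcp.json shows which servers would need a hosted endpoint or a shim. This sketch assumes the common file shape, a top-level `mcpServers` object where stdio entries carry a `command` and remote entries carry a `url`. The server names and the example URL are made up.

```python
import json


def split_by_transport(config):
    """Split MCP server entries into remote (HTTP/SSE) and stdio groups.

    Assumes the shape {"mcpServers": {name: {...}}}. Entries with a "url"
    are treated as remote, entries with a "command" as stdio, and entries
    with neither key are ignored.
    """
    remote, stdio = [], []
    for name, server in config.get("mcpServers", {}).items():
        if "url" in server:
            remote.append(name)
        elif "command" in server:
            stdio.append(name)
    return remote, stdio


# Hypothetical config with one remote server and one stdio server.
EXAMPLE = json.loads("""
{
  "mcpServers": {
    "tracker": {"type": "http", "url": "https://mcp.example.com/mcp"},
    "local-files": {"command": "npx", "args": ["-y", "some-mcp-server"]}
  }
}
""")

remote, stdio = split_by_transport(EXAMPLE)
print("usable as-is:", remote)
print("needs a hosted endpoint or shim:", stdio)
```

The length of the second list is a rough proxy for migration effort.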

Run the Math on Your Own Workload

The three scripts I used to get these numbers are small enough to read in a sitting. Clone the workload you’re thinking about retaining, point it at Sonnet 4.6, run it three times in a row, and read the cache_creation and cache_read_input_tokens fields off each session. That gives you the cold and warm numbers for your specific prompt shape in about twenty minutes. If you want the scripts somewhere you can tear down between jobs, a disposable cloud VM is the cleanest place to run them.
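Reading the fields off each run can be as simple as the sketch below. The payload shape is an assumption: I treat each run's usage as a plain dict using the field names mentioned in this article (`cache_creation.ephemeral_5m_input_tokens`, `cache_read_input_tokens`) plus `output_tokens`. How you obtain that dict from a session depends on the SDK and is not shown here.

```python
def summarize_usage(usage):
    """Pull the cache-related counters out of one usage payload.

    The dict shape is assumed; missing fields count as zero.
    """
    creation = usage.get("cache_creation") or {}
    return {
        "output": usage.get("output_tokens", 0),
        "cache_writes": creation.get("ephemeral_5m_input_tokens", 0),
        "cache_reads": usage.get("cache_read_input_tokens", 0),
    }


def is_cold(usage):
    """A run counts as cold when it wrote anything to the cache."""
    return summarize_usage(usage)["cache_writes"] > 0


# The three runs from this article, expressed in the assumed shape.
runs = [
    {"output_tokens": 323, "cache_creation": {"ephemeral_5m_input_tokens": 2070},
     "cache_read_input_tokens": 3375},
    {"output_tokens": 334, "cache_creation": {"ephemeral_5m_input_tokens": 0},
     "cache_read_input_tokens": 5445},
    {"output_tokens": 309, "cache_creation": {"ephemeral_5m_input_tokens": 0},
     "cache_read_input_tokens": 5445},
]

for number, usage in enumerate(runs, start=1):
    state = "cold" if is_cold(usage) else "warm"
    print(f"call {number}: {state} {summarize_usage(usage)}")
```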

If the math works in your favor — and for any invocation-driven workload it almost certainly does — the pricing story you bring to a client is built on measurement, not marketing copy. That’s the part of the offer no competing consultant can bluff.

FAQ

Is Claude Managed Agents pricing ever cheaper than DIY on the Agent SDK?

Token costs are identical — you pay the same model rates either way. Managed Agents adds $0.08 per active session-hour. For any invocation-driven workload under a few hundred hours per month, that fee is smaller than the engineering time DIY would take to maintain retries, credentials, sandboxing, and cache headers yourself.

How much does prompt caching actually save on a Managed Agent?

On a trivial workload, warm cache cuts per-call cost by about 70%. On a realistic workload with roughly 300 tokens of output, I measured 51% savings per call. The savings shrink as your output grows because output tokens can’t be cached.
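The shrinking effect is easy to see by holding the cached prefix fixed and varying output size. This sketch reuses the cold-call token counts from my triage workload and the assumed $3 and $15 per million base rates. It counts token costs only, ignoring the runtime fee and the handful of uncached input tokens, so it lands a few points above the measured 51%.

```python
INPUT_PER_MTOK = 3.00    # assumed Sonnet base input price, USD per million tokens
OUTPUT_PER_MTOK = 15.00  # assumed Sonnet output price, USD per million tokens


def warm_savings(output_tokens, cache_writes=2070, cache_reads=3375):
    """Fraction saved by a warm call versus a cold call, token costs only.

    Defaults are the cold-call counts from the triage workload. On the
    warm call the whole prefix (writes + reads) is billed as cache reads.
    """
    output_cost = output_tokens * OUTPUT_PER_MTOK
    cold = (
        output_cost
        + cache_writes * INPUT_PER_MTOK * 1.25
        + cache_reads * INPUT_PER_MTOK * 0.10
    )
    warm = output_cost + (cache_writes + cache_reads) * INPUT_PER_MTOK * 0.10
    return (cold - warm) / cold


for n in (300, 1000, 3000):
    print(f"{n:>5} output tokens: {warm_savings(n):.0%} saved")
```

The absolute saving per call stays the same; it just becomes a smaller slice of a bill that output tokens increasingly dominate.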

Do I need to apply for beta access to use Claude Managed Agents?

No. Beta access was already enabled on my production Anthropic API key with no waitlist or onboarding call. Upgrade the anthropic SDK to 0.97 or later and the beta.agents namespace is available. The older 0.86 release doesn’t expose that namespace and raises an AttributeError instead.