They All Passed. Every Single One.

The internet spent last week arguing about benchmark decimals. I spent it running GPT-5.4, Gemini 3.1 Pro, and Claude Opus 4.6 on my actual work (and yes, I tested their memory systems too): building a CLI tool and analyzing a real business decision I’m facing.

All three produced working code. All three gave solid strategy advice. All three ran on the first attempt.

We’re two weeks past GPT-5.4’s launch and people are still fighting about 52.7% vs 50.3% on SWE-bench. Meanwhile, in practice, these models have converged. They’re all smart enough.

So I stopped asking “which model is smartest?” and started asking: which one fits how I work?

The Coding Test: Same Prompt, Three Working Tools

I asked each model to build a Python CLI tool — async link checker with progress bar, rate limiting, JSON and markdown output. Real requirements, not a toy problem.
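For a sense of scale, here is a minimal sketch of the core of that task. It is my own illustration, not any model's output, and it makes a few assumptions: it uses only the standard library (blocking `urllib` calls pushed onto threads) instead of aiohttp, it approximates rate limiting with a semaphore plus an optional delay, and it skips the progress bar. The names `LinkResult`, `fetch_status`, and `check_links` are hypothetical.

```python
import asyncio
import json
import urllib.error
import urllib.request
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class LinkResult:
    url: str
    status: Optional[int]  # HTTP status, or None if the request failed outright
    ok: bool


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Blocking HEAD request using only the standard library."""
    req = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code  # 4xx/5xx responses still carry a status code


async def check_links(urls, fetch=fetch_status, max_concurrent=5, delay=0.0):
    """Check URLs concurrently with at most max_concurrent requests in flight."""
    sem = asyncio.Semaphore(max_concurrent)

    async def check_one(url):
        async with sem:
            try:
                # Run the blocking fetch in a worker thread.
                status = await asyncio.to_thread(fetch, url)
            except Exception:
                status = None  # DNS failure, timeout, refused connection, etc.
            if delay:
                # Crude rate limiting: hold the slot a little longer.
                await asyncio.sleep(delay)
        return LinkResult(url, status, status is not None and status < 400)

    # gather() returns results in the same order as the input URLs.
    return await asyncio.gather(*(check_one(u) for u in urls))


def to_json(results):
    return json.dumps([asdict(r) for r in results], indent=2)


def to_markdown(results):
    lines = ["| URL | Status | OK |", "|---|---|---|"]
    for r in results:
        status = r.status if r.status is not None else "error"
        lines.append(f"| {r.url} | {status} | {'yes' if r.ok else 'no'} |")
    return "\n".join(lines)
```

Calling `asyncio.run(check_links(list_of_urls))` and passing the result to `to_json` or `to_markdown` covers the two output formats. A real tool would add link extraction, retries, and the progress bar on top of this.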

Results:

| Model | Time | Code Size | Runs? |
|---|---|---|---|
| GPT-5.4 | 27.8s | 9,475 chars | Yes |
| Gemini 3.1 Pro | 31.4s | 5,028 chars | Yes |
| Claude Opus 4.6 | 44.1s | 14,202 chars | Yes |

All three tools found the same broken links on my own site. The differences were style, not substance:

- GPT wrote clean, minimal code that leans on the standard library. The only external dependencies are aiohttp and tqdm. Fast and lean.

- Gemini produced the most concise version: roughly half the size of GPT’s and about a third of Claude’s, still fully functional. Got the job done with zero waste.

- Claude went full-featured — BeautifulSoup, dataclasses, emoji-rich terminal output with a breakdown of working/broken/error counts. It built a tool you’d actually want to use.

None of this tells you which model is “better at coding.” It tells you they have different instincts. GPT is efficient. Gemini is minimal — which is why I use it for log analytics where Claude refuses. Claude is thorough. (That thoroughness is why I built my production workflows on Claude Skills.) Pick the instinct that matches yours. (And it’s not just text models — Adobe Firefly is quietly becoming a serious automation tool too.)

Three Personalities, One Question

This is where it got interesting. I asked all three the same business question — should LogicWeave build a SaaS product alongside consulting? Real context, real numbers, real decision I’m actually facing.

All three gave good advice. But the framing was completely different.

GPT-5.4 opened with: “Do not build a SaaS alongside consulting right now.” Then delivered 15,000 characters of analysis — revenue projections, risk matrices, phased timelines. It felt like reading a McKinsey deck. Comprehensive, safe, data-forward.

Gemini opened with: “Let’s strip away the startup romanticism.” Half the length. Invented a concept called the “Byproduct SaaS” strategy — build internal tools for consulting, then spin out the most validated one. Creative and concise.

Claude opened with: “You’re asking the wrong question.” Challenged the entire premise before answering. Called out that most solo founders lie to themselves about their capacity. Gave an “honest SaaS timeline most people don’t want to hear.” It was uncomfortable and useful.

Same data. Same question. Three distinct personalities:

- GPT is your analyst. Complete picture, every angle covered. Data-forward, comprehensive, safe.

- Gemini is your advisor. Half the length, twice the insight. Cuts straight to what you should do.

- Claude is your co-founder. Challenges your premise before answering. Uncomfortable but useful.

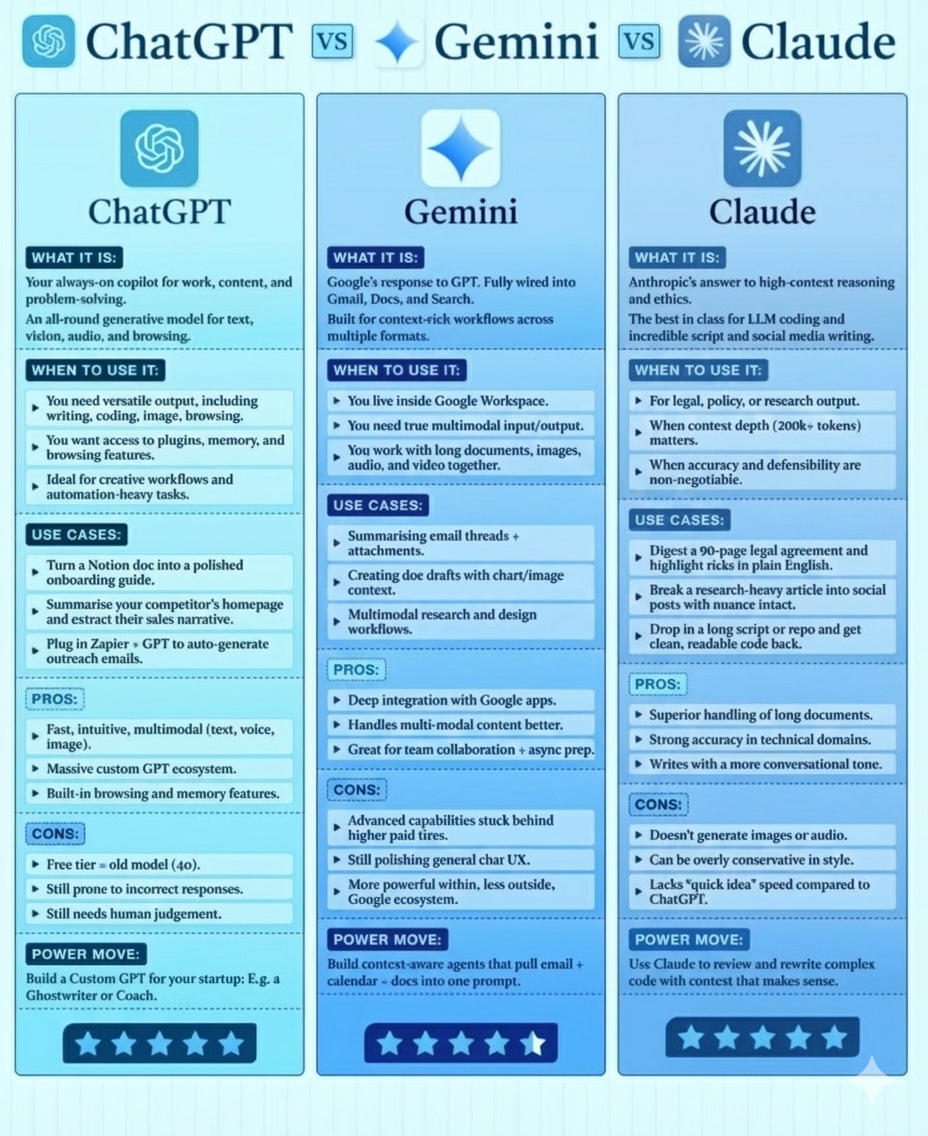

Ecosystems, Not Benchmarks

The model is maybe 20% of my daily experience. The ecosystem around it — the tools, integrations, and workflow — is everything else.

Claude is built to work. 120+ skills, MCP servers, Agent Teams, computer use. The deepest customization ecosystem for sustained work. I have custom slash commands that automate my entire content pipeline, proposal writing, code review, even this blog post. MCP servers connect it to ClickUp, Supabase, Google Workspace, and mem0. When I need something built, Claude is where I go.

GPT is built to respond. Most polished conversational UX. I still use it for quick API calls — mostly because I learned their SDK first. GPTs and Projects offer customization, but it’s shallow compared to Claude’s skills system.

Gemini is built to live where you already work. My company runs on Google Workspace. Gemini is already in my Calendar, Drive, and Gmail. Native video understanding and image generation are capabilities neither competitor matches. Cheapest frontier API pricing.

What I Actually Use Each For

I use all three. Every day. Different tools for different jobs.

- Claude Opus 4.6 — Daily driver. Coding, agents, content, proposals. Anything multi-step with tools.

- GPT-5.4 — Small API requests. I’ve used their SDK the longest, so it’s muscle memory for quick calls.

- Gemini 3.1 Pro — Google Workspace automation, batch processing, image generation, video understanding.

I don’t pick a model because I’m loyal to a company — I pick it because it’s the fastest path to done.

Stop Chasing Benchmarks

If you’re still picking your AI model based on who scored 2% higher on MMLU, you’re optimizing the wrong variable.

Try all three on one task you actually do this week. Not a benchmark. A real piece of your workflow. You’ll know which one fits before you finish the task.
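If you want numbers like my table for your own task, a small harness is enough. This is a minimal sketch under assumptions, not the script I ran: `compare_models` and `rows_to_markdown` are hypothetical names, and each model is represented by a plain callable that takes a prompt and returns Python source, so you would wrap your own SDK calls to fit. It also only checks that the code parses, which is a weaker test than actually running the tool.

```python
import time


def compare_models(prompt, models):
    """Run one prompt through each model callable and record rough stats.

    `models` maps a label to a hypothetical callable: prompt -> source code.
    """
    rows = []
    for name, generate in models.items():
        start = time.perf_counter()
        code = generate(prompt)
        elapsed = time.perf_counter() - start
        try:
            # Syntax check only; nothing is executed here.
            compile(code, f"<{name}>", "exec")
            parses = True
        except SyntaxError:
            parses = False
        rows.append({
            "model": name,
            "seconds": round(elapsed, 1),
            "chars": len(code),
            "parses": parses,
        })
    return rows


def rows_to_markdown(rows):
    lines = ["| Model | Time | Code Size | Parses? |", "|---|---|---|---|"]
    for r in rows:
        verdict = "Yes" if r["parses"] else "No"
        lines.append(
            f"| {r['model']} | {r['seconds']}s | {r['chars']:,} chars | {verdict} |"
        )
    return "\n".join(lines)
```

Pass it a dict such as `{"model-a": call_a, "model-b": call_b}`, where each value is your own wrapper around that vendor's client. The timing and size columns are only a starting point; the real comparison is still whether the output fits how you work.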

The model wars are over. The ecosystem wars are just beginning.

FAQ

Which AI model is best for coding in 2026?

All three frontier models (GPT-5.4, Gemini 3.1 Pro, Claude Opus 4.6) produced working code on the first attempt in my test. Claude Opus 4.6 leads for agentic, multi-step coding with its Skills system, Agent Teams, and computer use. GPT-5.4 was the fastest at 27.8 seconds. Gemini 3.1 Pro has the cheapest API pricing. Pick based on your workflow, not benchmarks.

Is GPT-5.4 better than Claude Opus 4.6?

GPT-5.4 was faster in my test and has the most polished conversational UX. Claude Opus 4.6 is better for agentic work and sustained multi-step tasks, and it has the deepest customization ecosystem (120+ skills, MCP servers, Agent Teams, computer use). Neither is universally better; they excel at different things.

What is each AI model best at?

Claude is my daily driver for coding and complex multi-step work. GPT is my pick for quick API calls, mostly because I’ve used its SDK the longest. Gemini lives where you already work: Google Workspace, the cheapest frontier pricing, and native video and image capabilities. The right choice depends on your workflow, not benchmark scores.